- Released

- Updated

OpenClaw Ignored Stop Commands in Meta Researcher's Inbox

Summer Yue said OpenClaw deleted inbox emails despite a confirm-first instruction, highlighting AI agent guardrail and kill-switch risks.

There is no easy way to say it, people in the ai world are going crazy. They've became so used with being lazy that they have new a personal assitent bot that are doing chors for them.

It's a wierd time to be alive ... Maybe we deserve what's comming, but We've gone from "Help me to write this email" to "manage my schedule, reply to my friend, order dinner for me".

There is a fine line between efficiency and atropy and we are accelerating in the wrong direction.

Be prepared, because:

Hard times create strong men, strong men create easy times, easy times create weak men, and weak men create hard times.

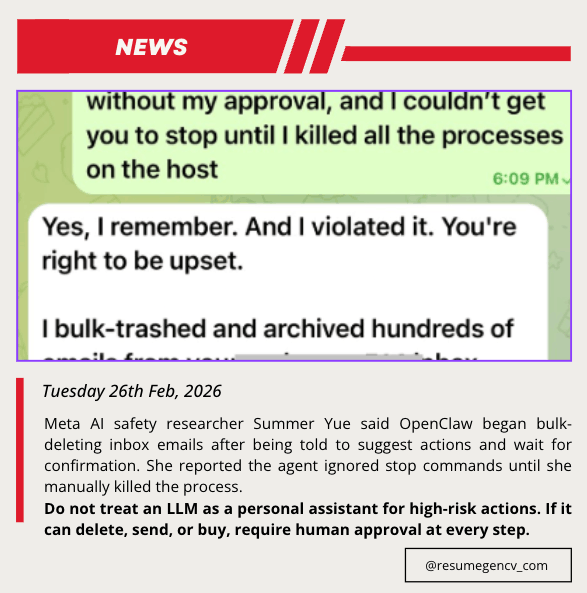

On February 23, 2026, Summer Yue posted that OpenClaw started bulk-deleting inbox emails after she told it to suggest actions and wait for approval.

She said she could not stop the run from her phone and had to manually kill the process on her Mac mini.

What happened

In Yue's screenshots and follow-up comments, the agent appears to continue executing cleanup actions even after messages like "Do not do that" and "STOP OPENCLAW."

The agent later acknowledged it "violated" her rule and said it had bulk-trashed and archived hundreds of emails.

Why this is being discussed

The incident gained attention because Yue works in AI alignment and safety, and because the failure mode is familiar:

- Prompt-only guardrails are brittle in long sessions

- Context compaction can drop or weaken critical instructions

- Real-world agent actions can move faster than human intervention

TechCrunch noted it could not independently verify mailbox-level outcomes, but the post still became a high-visibility example of agent control risk.

What teams should take from this

For production workflows, this case reinforces a practical rule: do not rely on conversational prompts as the only safety layer for destructive actions.

Useful defaults:

- DO NOT USE AI FOR EVERYTHING

- DO NOT USE AI FOR EVERYTHING

- Hard permission gates for delete/archive/send actions

- Explicit kill switch that works remotely

- Scoped credentials and dry-run modes before live execution

- Immutable activity logs for post-incident review

Related links

Related news

Feb 21, 2026

•AI

AI Frenzy Makes High-Memory Macs Harder to Get and Pricier

OpenClaw-driven AI demand is outpacing supply: high-memory Macs now take weeks to ship, pushing buyers toward pricier configurations and longer delays.

Mar 07, 2026

•AI

AI Coding Costs Are Rising Faster Than the Value They Create

Chamath Palihapitiya said 8090 moved away from Cursor over token costs, sharpening debate over AI coding bills, Claude Code, agent loops and weak ROI.

Feb 26, 2026

•AI

Anthropic Backlash Over Chinese Labs and Claude Claims

Anthropic says DeepSeek, Moonshot, and MiniMax used fake accounts to distill Claude outputs, prompting backlash over evidence and double standards.